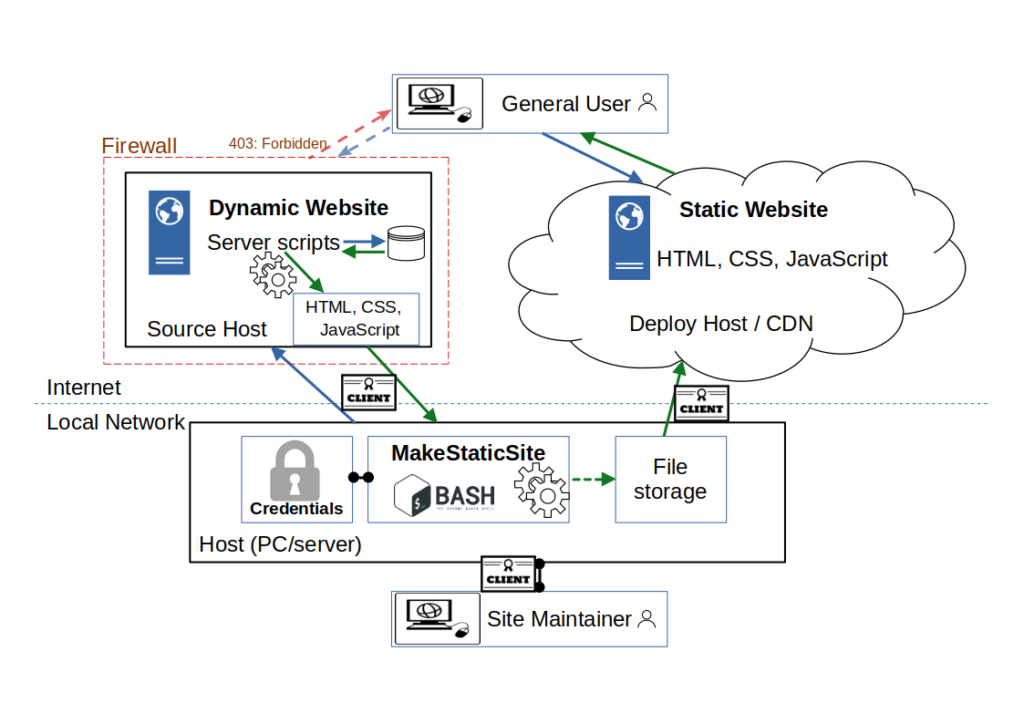

MakeStaticSite’s network architecture consists of three spheres of activity: the source host, where the website is created and maintained; the host for MakeStaticSite, where the software is installed and run; and the host where the static version is deployed. These may be variously located on one’s own computer, an Intranet or the Internet, separately or together. Hence, there are many permutations, some of which we indicate below.

1. Network architecture (simplified)

We start with a simplified architecture, as may be expected in standard usage.

In this simplified view, there are two main users. The site maintainer maintains the website, then builds and deploys the static version to the deploy host. The general user accesses, but doesn’t edit, the website, i.e. is the site consumer.

In this scenario, web content is managed on the Internet, as usual, except that it is now subject to access restrictions, effectively imposing a firewall. In other respects, authoring content remains the same, but a further step is required for publication, involving the use of MakeStaticSite. This step is referred to as deployment, and the deployment target can be any server that supports certain networking protocols.

MakeStaticSite itself is hosted on a local network, which can be a personally owned machine or an organisation’s Intranet, generally anywhere that sits behind a router and does not allow incoming network traffic.

For security and performance reasons, it is envisaged that the entire site is deployed on the Internet as a static version on a content distribution network (CDN), generally separate from the source host, typically in the Cloud.

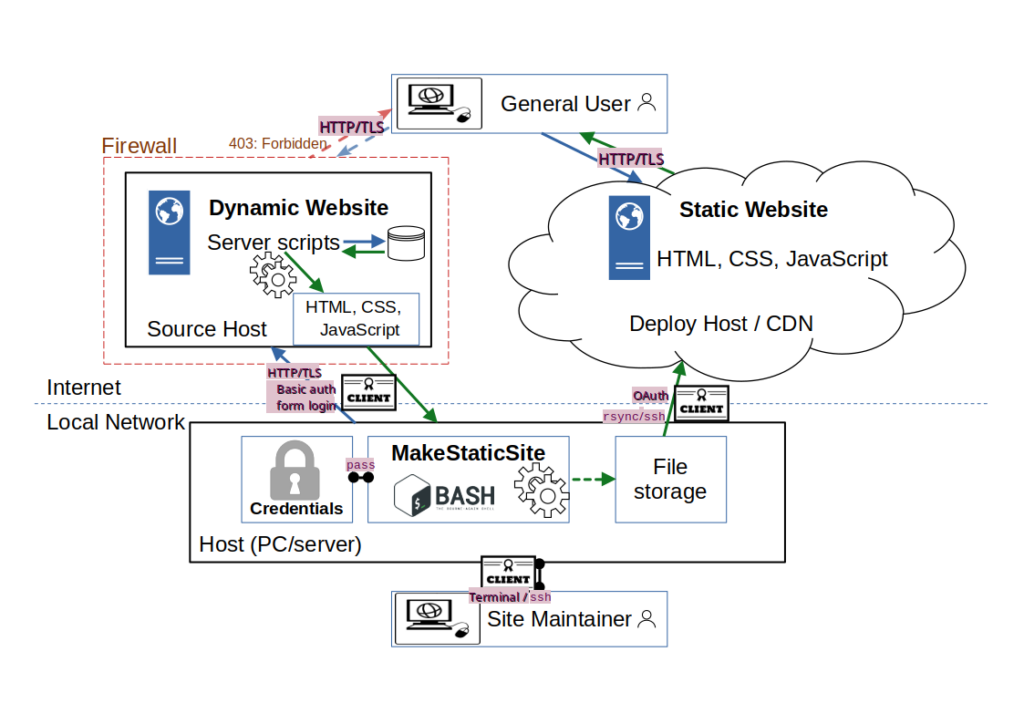

2. Network architecture: local install

This is the same arrangement depicted in more detail, emphasizing how MakeStaticSite coordinates activity from its local host while the network is secured through certificates and credentials management.

Without taking particular measures, content management systems such as WordPress are generally open to a wide range of attacks, from brute force logins to injection attacks that install back doors. By closing access to these ‘attack surfaces’, security is greatly enhanced, though it can never be 100%. The methods currently available to MakeStaticSite are HTTP basic authentication under SSL and access restricted by web form logins. They are elementary but effective, especially when combined.
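As an illustration of the first of these methods, the following minimal Python sketch shows how a client might prepare HTTP basic authentication credentials and refuse to send them over an unencrypted connection. It is not taken from MakeStaticSite; the function names and the example URL are hypothetical.

```python
import base64


def basic_auth_header(username: str, password: str) -> dict[str, str]:
    """Build an Authorization header for HTTP basic authentication.

    The credentials are only base64-encoded, not encrypted, which is
    why basic authentication should always travel over TLS.
    """
    raw = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return {"Authorization": f"Basic {token}"}


def require_https(url: str) -> str:
    """Return the URL unchanged, or raise if it is not an https:// URL."""
    if not url.lower().startswith("https://"):
        raise ValueError(f"refusing to send credentials to non-TLS URL: {url}")
    return url


if __name__ == "__main__":
    # Hypothetical source host and credentials, for illustration only.
    url = require_https("https://source.example.com/")
    print(url, basic_auth_header("maintainer", "not-a-real-password"))
```

A web form login would then be performed as a second step on top of this, so that an attacker has to get past both layers.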

Even on one’s own computer, it is good practice to reduce exposure of credentials as far as possible. So, MakeStaticSite supports the use of pass for encrypting and managing credentials, kept separate from the application. Mirrors are created and stored locally before being copied remotely. In this instance, the file store is the local machine, but future versions should support the use of Git repositories, such as those hosted on GitHub.
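To illustrate keeping credentials out of the application, here is a minimal Python sketch that reads a secret from the pass password store at run time rather than from a configuration file. It relies only on the standard `pass show <entry>` command, whose first output line is the password. The entry name and the injectable `run` parameter are assumptions made for this example, not MakeStaticSite's own interface.

```python
import subprocess
from typing import Callable, Sequence


def _run(argv: Sequence[str]) -> str:
    """Run a command and return its standard output, raising on failure."""
    result = subprocess.run(list(argv), capture_output=True, text=True, check=True)
    return result.stdout


def fetch_secret(entry: str, run: Callable[[Sequence[str]], str] = _run) -> str:
    """Return the password stored under `entry` in the pass store.

    By pass convention, the first line of an entry is the password;
    any further lines hold optional metadata and are ignored here.
    """
    lines = run(["pass", "show", entry]).splitlines()
    if not lines:
        raise ValueError(f"pass entry {entry!r} is empty")
    return lines[0]


if __name__ == "__main__":
    # Hypothetical entry name; requires pass to be installed and initialised.
    print(len(fetch_secret("example.com/site-maintainer")))
```

Because the secret is fetched only when needed, it never has to be written into the site's configuration file.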

3. Network architecture: local install 2

We add to Figure 2 the main network protocols in use: the site maintainer supplies basic authentication credentials to access the HTTP server and then the web login details to edit content on the site, with web transfers secured by HTTP over TLS.

Logins to terminal sessions on the host (PC/server) may be variously secured by username and password, fingerprints, and so on, according to local policy.

Deployment can be by rsync over ssh or via OAuth tokens, though currently only Netlify is explicitly supported for the latter.
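For the rsync route, the following Python sketch shows how such a deployment command could be assembled. It uses only standard rsync options (`-a`, `-z`, `-e`, `--delete`). The function name, user, host and paths are hypothetical, and the options MakeStaticSite itself passes to rsync may differ.

```python
def rsync_over_ssh_command(
    source_dir: str,
    user: str,
    host: str,
    remote_path: str,
    delete: bool = False,
) -> list[str]:
    """Build an rsync argument list that copies a local mirror over ssh.

    The trailing slash on the source means "copy the directory's
    contents", so the mirror lands directly in remote_path.
    """
    source = source_dir.rstrip("/") + "/"
    argv = ["rsync", "-az", "-e", "ssh"]
    if delete:
        # Remove remote files that no longer exist in the local mirror.
        argv.append("--delete")
    argv += [source, f"{user}@{host}:{remote_path}"]
    return argv


if __name__ == "__main__":
    # Hypothetical values; pass the list to subprocess.run(..., check=True) to execute.
    print(" ".join(rsync_over_ssh_command(
        "mirror/example.com", "deploy", "example.org", "/var/www/site")))
```

Building the command as a list, rather than a single string, avoids shell quoting problems if a path contains spaces.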

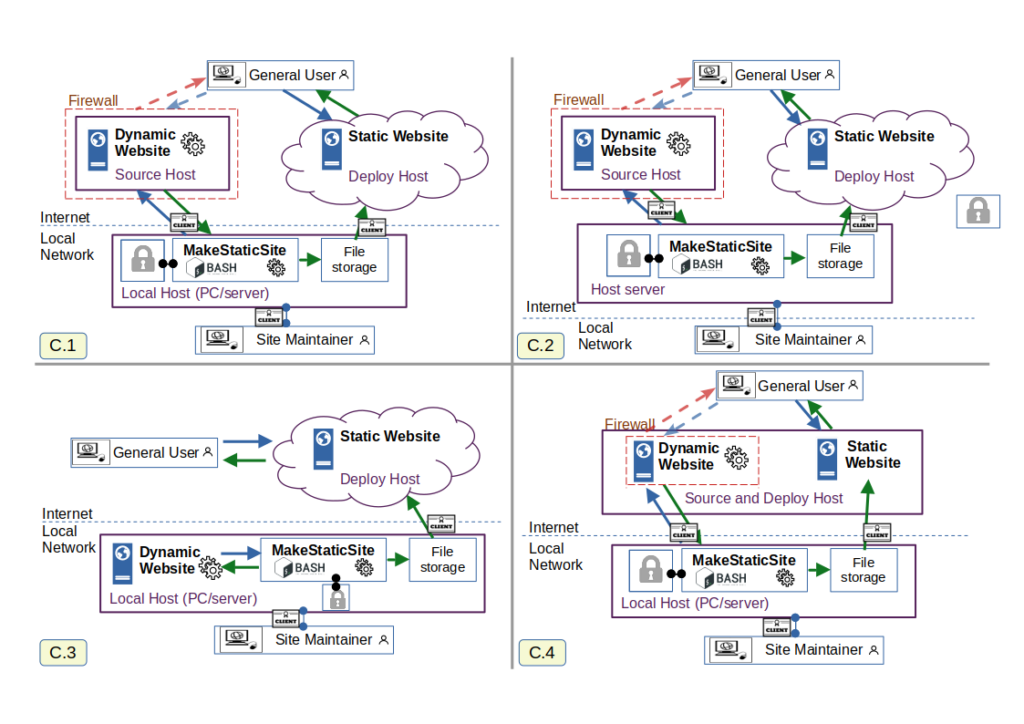

4. MakeStaticSite Network Architecture: Configuration Choices

So far, discussion has been with respect to just one possible configuration. In Figure 4, it is joined by three others.

C2: MakeStaticSite can be set up to be accessed from anywhere with an Internet connection; the standard means is to use ssh to start a terminal session.

C3: For an individual, especially a developer, there is no need to use third parties for hosting the website under construction. This can all be done locally, as is the case for this website. Its only Internet presence is the end result, deployment to Netlify under https://makestaticsite.sh (sic). The downside of this arrangement is that special provision needs to be made to allow others access to edit content, so this configuration is not suitable for site co-creation.

C4: This arrangement sees content creation and deployment on the same host, though they can be kept virtually separate by varying access restrictions on virtual hosts. It can work even on budget shared hosting accounts where the web server is general purpose and not a CDN; even though such a server supports scripts, the fact that the content is static means that security issues are greatly reduced.

There are many other possible permutations; with gigabit bandwidth to people’s homes, it is possible to combine all three spheres of activity on a single host with an ISP or even on one’s own personal machine. The choice of configuration will be determined by many factors such as convenience of access, the number of users involved, data handling requirements and cost. Some of these considerations concern choices in source and destination, and others the extent to which services should work online and/or offline.

Whatever the choice, you are generally not tied down to a particular host provider. In almost all arrangements, you have access to the data and can make complete copies on a regular basis, i.e. you retain control.

5. MakeStaticSite Architecture: Inputs and Outputs

MakeStaticSite’s internal architecture is quite distinct from that of most other static site generators as it is not about assembling and instantiating templates, but making faithful copies and refining them for live publication.

The work is coordinated by two scripts, setup.sh and makestaticsite.sh, both of which extensively query the source host, the primary input. Not shown here is the user interaction during setup, which leads to the creation of the site’s configuration (.cfg) file. This is then supplied as an input to makestaticsite.sh, which has the task of generating the mirror. Running Wget should account for the majority of a site, but there are often missing components that the crawler cannot reach, hence the Extras folder. On the other hand, some resources that are crawled (such as login pages) are not needed and should be replaced, hence the Snippets function.
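The three ingredients described above (crawl, extras, snippets) can be pictured with a short Python sketch. It is an illustration under stated assumptions, not MakeStaticSite's implementation. The Wget options shown are standard mirroring options but not necessarily the ones the scripts use; the directory layout is invented; and replacing a whole page with a snippet is a simplification of whatever the Snippets function actually does.

```python
import shutil
from pathlib import Path


def wget_mirror_command(url: str, dest_dir: str) -> list[str]:
    """Build a Wget argument list for mirroring a site into dest_dir."""
    return [
        "wget",
        "--mirror",            # recursive download with timestamping
        "--page-requisites",   # also fetch images, CSS and scripts
        "--convert-links",     # rewrite links so the copy works offline
        "--adjust-extension",  # save HTML pages with an .html suffix
        "--no-parent",         # do not ascend above the start URL
        f"--directory-prefix={dest_dir}",
        url,
    ]


def add_extras(extras_dir: Path, mirror_dir: Path) -> None:
    """Copy files the crawler could not reach into the mirror."""
    shutil.copytree(extras_dir, mirror_dir, dirs_exist_ok=True)


def apply_snippet(page: Path, snippet: str) -> None:
    """Replace an unwanted crawled page (e.g. a login page) with a snippet."""
    page.write_text(snippet, encoding="utf-8")


if __name__ == "__main__":
    # Hypothetical URL and directory names, for illustration only.
    print(" ".join(wget_mirror_command("https://source.example.com/", "mirror")))
```

In this picture, the crawl runs first, and the extras and snippets steps then refine the resulting copy before it is deployed.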

The process is iterative and conducted in phases, described in the section on workflow.